This is a lightly edited and updated version of an invited talk I gave at the DHSI Summer Institute in 2021. It is based on sustainability work we’ve been doing at the Roy Rosenzweig Center for History and New Media (RRCHNM), and while I’m leading the charge, I want to start by acknowledging the contributions of George Mason University (GMU) faculty, staff, and graduate students who’ve been integral to this project.

- Megan Brett, Digital History Associate (2019-2022)

- Laura Crossley, Graduate Research Assistant (2019)

- Amanda French, Contractor (2019-2020)

- God’s Will Katchoua, Systems Administrator (2019-2022)

- Andrew Kierig, Digital Publishing Lead, Mason Publishing (2019-2021)

- Joanna Lee, Digital Publishing Lead, Mason Publishing (2022-present)

- Dana Meyer, Graduate Research Assistant (2020)

- Nathan Sleeter, Research Assistant Professor (2019-present)

- Kris Stinson, Graduate Research Assistant (2020)

- Tony Trinh, Systems Administrator (2022-present)

- Misha Vincour, Contractor (2021-present)

The Metaphor

In traditional analog publishing, there is a moment of transference when the final version of a scholarly book or an article is handed off to the publisher. From the scholar’s point of view, the responsibility of sustaining and preserving their published work now falls on an established pipeline of publishers, printers, distributors, wholesalers, retailers, librarians, and archivists. Hard decisions may eventually have to be made about the fate of a scholar’s so-called backlist of previously-published scholarship (publishers may take books that aren’t selling out of print, and librarians may deaccession unused books when they run out of shelf space), but the scholar is largely insulated from these decision-making processes. As far as they’re concerned, their work can and should live forever.

Digital humanities projects break many traditional scholarly workflows, including those around sustaining and preserving scholarship after it is published—or rather, as is often the case, self-published. As scholars acquire longer and longer backlists of previously-published digital scholarship, many of us will need to grapple with the kinds of issues that publishers and librarians are intimately familiar with. In short, we need to become more intentional about what we are saving, why, and for whom. These questions are at the heart of my sustainability work on several hundred projects created at or hosted by RRCHNM since 1994. And it’s that work I’m going to talk about here.

Over the course of this blog post, I’m going to posit a difference between sustaining and preserving digital projects, examine several ways projects can be sustained or preserved, and consider some of the myriad intellectual, practical, and technical factors that can go into making decisions around project sustainability and preservation.

But first, I want to start out with a hypothesis: we’ve been using the wrong metaphor. DH projects are not books… they’re cars.

https://upload.wikimedia.org/wikipedia/commons/8/8f/Antique_car_in_forest.jpg

Expensive, resource-intensive status symbols with a practical purpose often supplemented by all the latest bells and whistles and attempts by creators to push the envelope of what’s possible. And after you make a big initial investment to get one, there’s only gas and nominal registration fees for the license plates, and they drive along fine for five or seven years—just long enough for that extended warranty the dealer convinced you to buy to expire—then start to break down in increasingly dramatic ways that are increasingly expensive to fix. And at some point that car will either become a classic, worthy of preserving at whatever the cost, or it’ll become too expensive to fix and take a one-way trip to the scrapyard.

Now tell me that doesn’t sound like a DH project, from the big initial grant to build it, to the ongoing hosting costs and annual registration fee for the domain, to the 5-7 year timeline before things start to break and some become classics, and some vanish from the internet.

And if we think about them as cars, rather than books, it can help us begin to readjust our and others’ ideas about how long they should survive and how we might care for them, transform them, and maybe eventually shut them down.

No matter what metaphor we use, DH project creators are generally responsible for the sustainability and preservation of our digital objects, which means we are faced with maintenance and end of life decisions we don’t regularly encounter in physical book publishing situations. Most of us are digital hoarders—we want to keep it all!—but we have to put on the hat of publishers, librarians, and car owners to make hard decisions about where and how to allocate our scarce resources of time and money. This post isn’t going to give you all the answers about how to do that, but it is going to go through how we’ve been approaching this problem at RRCHNM.

The Problem

When I joined RRCHNM in 2018, there was no one looking out for our digital backlist of center projects. By 2019, we had finished a complete turnover of previous directors and systems administrators and our servers had become a repository of orphaned projects and junk:

- projects by former center directors and GMU faculty

- old course websites for GMU faculty dating back to the 1990s

- “friend of Roy” projects, hosted for non-GMU scholars who couldn’t find hosting elsewhere in the early 2000s

- projects that never got off the ground

- incomplete prototypes for finished contracts

- discontinued wikis

- random files not connected to any known project

In short, it was not dissimilar to the kinds of junk I find lurking around in the corners of my hard drive when I’m running out of space and looking to delete some old files.

This matters because servers are hacked through old projects. It matters because we have no ability to update or access many old projects. And it matters because when our servers go down or we’re decommissioning servers, we can’t get sites back up again and may not even know the sites existed in the first place.

The Plan

At this point, I did what any good historian-turned-administrator would do… I made a policy, which turned into a workflow, which turned into a tracking spreadsheet. We had over 300 projects on our servers. I say “over” because I don’t know the exact number: we still keep finding more here and there as we work our way through shutting down projects and migrating them to new servers when old servers hit end of life, and of course new projects spin up almost as fast as we shut old ones down. Speaking of which, I am inserting a plea here to USE SUBDOMAINS NOT SUBFOLDERS, because almost all the lost projects and other discoverability issues happen because projects ended up in subfolders of other projects.

Over 200 of the projects we found were flagged in our sustainability triage as needing attention, ranging from projects created in the 1990s to projects just finishing this year (2021). Some were obviously broken. Some looked great until we realized they were running Omeka 0.10beta from 2008. Many had security vulnerabilities or were constantly under attack by hackers (insert obligatory thanks to our systems administrators God’s Will Katchoua and now Tony Trinh who keep us up and running!). While web-based projects are not the be-all, end-all of DH, it is the triage of these projects that I’m going to focus on here.

The core of the policy we generated is recognizing our responsibilities:

- to our audiences: people who use and rely on projects, especially K-12 teachers who have incorporated our projects into their curriculum

- to our fellow scholars: people who have entrusted their scholarship to us to publish, and who use the projects because they recognize the projects’ intellectual merit and value

- to future scholars: people who study the history of the DH field

  - I’m not as worried about “digital dark ages” as some folks may be, as I’m a pre-modern historian and know how much can be done with limited sources! But that doesn’t absolve us of the obligation to save stuff now; it just means it’ll be okay when some or even many of those efforts inevitably fail

- to our funders: people we made promises to when they gave us money to create projects

  - many of those promises turned out to be unrealistic, given what we now know about the longevity of digital projects, but we still have an obligation to fulfill them to the best of our ability

After that, the central part of our workflow is figuring out which pieces of a project are important:

- the data (always)

- the metadata (yes)

- scholarly apparatus around it, if extant (yes)

- implicit arguments made by design and interface (some)

  - opinions differ but I come down on the side of “interface = argument” and so the interface should be saved where possible

- code (every once in a while)

  - bespoke interfaces are interesting; the core installation of WordPress 5.7 not so much

- project documentation (in small quantities)

  - we don’t need every email from the project team but we do need some documentation to understand the purpose and history of projects

Given those responsibilities and our assessment of a project’s importance, the purpose of our tracking spreadsheet is then 1) figuring out what to do with each project and 2) keeping track of them all while we work through the backlist.

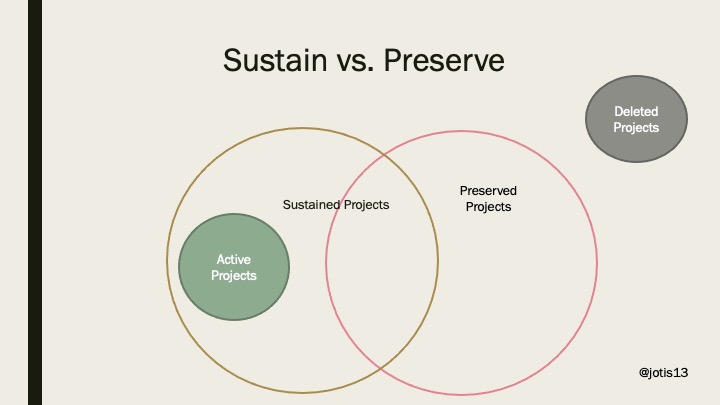

Rubber Meets the Road

The first decision we had to make for each project was whether to sustain it or preserve it. Lots of people mean lots of different things by these terms, but I use them as “keep running” vs. “keep bits.” A sustained site is one where I can go to the URL and have content sent to my browser, and it doesn’t matter to me what is going on under the hood. To visit a preserved site, I may have to jump through more hoops to get to it, and it may no longer be in an easily readable format, but all the content or code is there. To use a book analogy: a sustained site is one that’s on open shelves in the library while a preserved site is one that is in the library’s special collections vault with limited access. To use the car analogy: a sustained car can take you to the grocery store while a preserved car might be up on cinder blocks without its tires.

The 9/11 Digital Archive is a sustained DH project. We’ve moved it from custom code in 2002 to early Omeka versions to the latest versions of Omeka over the years. The data is still there, but under the hood it looks totally different. World History Commons is also a sustained DH project. Its content was migrated from various previous projects created from the 1990s onward. Those previous projects have been, or are in the process of being, either shut down or flattened (more on that in a moment) now that their content is searchable in the new interface.

By contrast, the ECHO project from the early 00s (Exploring and Collecting History Online: Science, Technology, and Industry) is a preserved DH project. You can see it in the Wayback Machine and you can find the files in GMU’s institutional repository, but it no longer lives on the RRCHNM servers.

So how do we decide what should be sustained vs. preserved? We have a list of questions we ask ourselves that range from the practical to the scholarly:

- whose project is it? does its site belong on our servers or is there somewhere else it can/should be hosted?

- is the site being actively updated?

- how many people are using the site? how often are they using it?

- is this a prestigious project that is often quoted/referenced, regardless of site traffic?

- how hard is it going to be to keep the underlying code updated? can we do it in-house or do we have to pay contractors? how long will the update last?

- is the site an immediate security risk?

- what are the intellectual merits of the overall project?

At the end of the day, our resources are finite and we prioritize our own projects over those that don’t belong to us, especially as places like Reclaim Hosting now make it much easier to self-host a scholarly digital project than it was 20 years ago. We do make some exceptions to this rule, primarily for K-12 projects or local historical societies whose sites can be flattened (yes, yes, I will get back to that!) and thus require minimal resources on our part to sustain.

If a site doesn’t belong on our servers, if no one is using it, and if no one on the current RRCHNM team believes in its intellectual merits and wants to take responsibility for it, then the project is transferred to its proper owners and/or preserved.

Otherwise, we are talking about some form of sustainability, depending on the cost, difficulty, and security issues associated with each project.

(Note: I’m excluding ongoing projects from this discussion, because if a project is still being actively developed, it is being sustained as a matter of course.)
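
The decision rule in the last few paragraphs can be sketched as a small function. This is a minimal illustration only: the `Project` fields and the outcome labels are hypothetical names invented for the example, not columns from the RRCHNM tracking spreadsheet, and real decisions also weigh cost, difficulty, and security case by case.

```python
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical record for one row of a tracking spreadsheet."""
    name: str
    belongs_on_our_servers: bool  # is it ours, or should it be hosted elsewhere?
    has_active_users: bool        # is anyone still using the site?
    has_internal_champion: bool   # does someone on the team vouch for it?
    actively_developed: bool = False


def triage(project: Project) -> str:
    """Return a coarse outcome: 'ongoing', 'transfer/preserve', or 'sustain'."""
    if project.actively_developed:
        # Ongoing projects are sustained as a matter of course.
        return "ongoing"
    if not (project.belongs_on_our_servers
            or project.has_active_users
            or project.has_internal_champion):
        # All three tests fail: hand it to its owners and/or preserve it.
        return "transfer/preserve"
    # Otherwise some form of sustainability is on the table.
    return "sustain"
```

Note that the three tests are combined with "and" in the prose: a site with even a handful of loyal users, or one colleague willing to take responsibility for it, stays in the "sustain" conversation.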

Sustainability in Practice

Sustainability generally means one of three things for us: recoding, flattening, and/or reinventing.

First, it can mean actually putting in the time and effort to recode the project as needed. For example, the Google API transitioning behind a paywall broke our Hurricane Digital Memory Bank and Histories of the National Mall projects, which had to be recoded to use Leaflet and OpenStreetMap. Obligatory shout-out to Megan Brett and Jim Safley, who did most of this work!

However, I want to be SUPER CLEAR that this requires a lot of work and costs money. Drupal 7 and Drupal 8 being deprecated is costing us tens of thousands of dollars to get all our Drupal websites updated. And then in another couple of years, we’ll have to update our sites all over again. For some of these sites, updating Drupal or moving to a different content management system (which will also eventually have to be updated) is our only option. They need to have user log-ins and all the other accoutrements of a Drupal website.

For others, though, we can move to our second type of sustainability: flattening the sites (see, I promised we’d get there). Not every project needs to stay online with its original codebase, especially since databases are a security risk and high maintenance. For some sites, the important thing is their content. Anyone who wants to study the history of WordPress site customizations can look at the general codebase; we don’t need to save a version of WordPress 4.8.1 with no plug-ins and a built-in theme for posterity, just the contents of the site. So we use wget to flatten, or transform, our sites into static HTML, CSS, and JavaScript (no database functionality). Ideally we also remove external dependencies as much as possible, though link rot (external links that go to no-longer-extant sites) will always be a problem.
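
For readers who have not used wget this way, here is a minimal sketch of how a flattening command might be assembled. The flags are standard GNU wget options, but the particular combination, the function names, and the example URL are assumptions for illustration, not the exact invocation used at RRCHNM.

```python
import subprocess


def build_flatten_command(url: str, output_dir: str) -> list[str]:
    """Assemble a GNU wget command that mirrors a site as static files."""
    return [
        "wget",
        "--mirror",            # recursive download with timestamping
        "--page-requisites",   # also fetch the CSS, JavaScript, and images pages need
        "--convert-links",     # rewrite links so they work in the local copy
        "--adjust-extension",  # save pages with .html extensions
        "--no-parent",         # never climb above the starting URL
        f"--directory-prefix={output_dir}",
        url,
    ]


def flatten(url: str, output_dir: str) -> None:
    """Run the command; requires wget to be installed and the site to be reachable."""
    subprocess.run(build_flatten_command(url, output_dir), check=True)


if __name__ == "__main__":
    # example.org is a placeholder, not a real project URL.
    print(" ".join(build_flatten_command("https://example.org/project/", "flattened")))
```

Whatever flags you settle on, expect to spend some time afterwards fixing up the HTML by hand, since forms, searches, and anything else that relied on the database will no longer work.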

An example of a flattened site is RRCHNM’s first podcast, Digital Campus TV. We used wget to generate a static version of the site, fixed up the HTML, and put it online. (I give myself a shout-out for that one.) This is our preferred solution for sustainability, because it requires almost no upkeep to sustain these sites. In 10 or 50 or 100 years, browsers will still be able to read HTML. Or, if there is such a major overhaul in the structures of the web that there is no backwards compatibility with HTML, then there will be a straightforward upgrade path from HTML to UtopiaML that we take once for all our projects, which will then be good for another century or two.

Our last type of sustainability is reinvention. The aforementioned World History Commons combines the content from eight previous RRCHNM websites into a single “commons.” This is the kind of project that we get external funding for and so is the exception rather than the rule.

There are other ways to sustain sites, such as containerization and emulation, but I’m going to be really honest here: that takes time and money, and the containers and emulators themselves have to be sustained. Flat HTML, CSS, and JavaScript is about the easiest thing to keep going on the internet. Websites we created in the 1990s are in better shape than the ones we created in the early 2000s because they were HTML and didn’t involve PHP, databases, or content management systems. Even the dreaded Flash is easier to deal with than a severely out-of-date content management system. If you have one or two really complex digital objects you want to save… sure, go for emulation. I am always up for a trip down memory lane by starting up DOSBox on the Internet Archive and playing the Oregon Trail, the game which led some people to call people my age “the Oregon Trail Generation.” But if you’re trying to save a longer digital backlist of a dozen or a hundred or hundreds of projects, it’s going to be time-consuming. Y’all have been warned.

Instead, I think we’re better off following the example of the Endings Project team, and focusing on creating add-ons to make flattened sites more functional for people used to dynamically generated content, for example a search function that can be deployed on static websites, so there’s no need for an underlying database to search the site.
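
To make the idea of database-free search concrete, here is a rough sketch of one way to precompute a word index for a flattened site. It is not the Endings Project’s implementation; the function names and the `search-index.json` filename are hypothetical. The resulting JSON file could be loaded by a few lines of JavaScript on the static site.

```python
import json
import re
from collections import defaultdict
from html.parser import HTMLParser
from pathlib import Path


class TextExtractor(HTMLParser):
    """Collect visible text, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def tokenize(html: str) -> set[str]:
    """Return the set of lowercase words found in a page's visible text."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return set(re.findall(r"[a-z0-9]+", " ".join(parser.chunks).lower()))


def build_index(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each word to the sorted list of page paths containing it."""
    index = defaultdict(set)
    for path, html in pages.items():
        for word in tokenize(html):
            index[word].add(path)
    return {word: sorted(paths) for word, paths in sorted(index.items())}


def index_site(site_dir: str, out_file: str = "search-index.json") -> None:
    """Index every .html file under site_dir and write the JSON beside them."""
    root = Path(site_dir)
    pages = {
        p.relative_to(root).as_posix(): p.read_text(encoding="utf-8", errors="replace")
        for p in root.rglob("*.html")
    }
    (root / out_file).write_text(json.dumps(build_index(pages)), encoding="utf-8")
```

The index is built once, when the site is flattened, so nothing has to run on the server afterwards.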

Preservation in Practice

Okay, I want to turn now to preservation, which I’ve argued is separate from sustainability. This is for when you have a cool, custom-coded website from the 00s that no one visits anymore, or course websites from the 1990s that might be of interest to future historians of education: you don’t want to get rid of them, but they also don’t need to be sitting on our servers. At RRCHNM, we preserve digital projects by sending them to Mars.

(I just can’t resist making that joke all the time.) We send them to MARS: Mason Archival Repository Service, or GMU Libraries’ DSpace repository.

Here’s where I give a shout-out to the amazing Andrew Kierig and now Joanna Lee, who have worked with us to figure out a way for the libraries to support preservation of complex digital objects in a way that didn’t just offload all our technical debt onto them, but rather fit into their existing technical infrastructure and actually used resources in a way that helped them justify having that infrastructure.

An aside: don’t try to foist your DH websites off onto librarians. Not unless you and they both agree that’s the path forward from even before you start the grant application and they get paid for their troubles from grant funds.

When we use wget to create static versions of the site, we also create a WARC (Web ARChive) file. We then zip up the project website and upload it, the WARC file, screenshots (in TIFF, because those aren’t lossy, but sometimes also in JPEG because, annoyingly, the repository can show image previews of JPEG and PNG files but not of TIFF files, which have to be downloaded to be viewed), associated documentation, and of course metadata to the GMU institutional repository.
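
As a rough sketch of those two steps, the snippet below builds a wget command that also writes a WARC and zips up a flattened site directory. `--warc-file` is a real GNU wget option (by default it produces a compressed `.warc.gz` file), but the function names, the other flags chosen, and the folder layout are assumptions for illustration rather than a record of our actual process.

```python
import zipfile
from pathlib import Path


def build_warc_command(url: str, warc_name: str) -> list[str]:
    """Assemble a wget command that records a WARC while crawling the site."""
    return [
        "wget",
        "--mirror",                  # crawl the whole site
        "--page-requisites",         # include CSS, JavaScript, and images
        f"--warc-file={warc_name}",  # wget appends .warc.gz to this name
        url,
    ]


def zip_site(site_dir: str, zip_path: str) -> list[str]:
    """Zip every file under site_dir; return the archive's member names."""
    root = Path(site_dir)
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(root.rglob("*")):
            if path.is_file():
                # Store paths relative to the site root so the zip unpacks cleanly.
                archive.write(path, path.relative_to(root).as_posix())
        return archive.namelist()
```

Keep the zip file outside the directory being zipped, so the archive does not try to swallow itself.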

This, by the way, is for any critical code scholars who’ve been pulling their hair out at my casual disregard for the original code bases of our projects: this is where we finally do save original code bases. Our systems administrator God’s Will (and now Tony) performs code dumps for custom-built sites, where part of the intellectual value of the site is what we did to create it, and those are also zipped up and uploaded to MARS.

You can see here a list of our project metadata, which led to many of the questions we wrangled with while uploading the metadata:

- who is an author? PI? PM? coPI? advisors? content creators? coders? people who’ve maintained the site?

- what is the title of the project? the site URL? something else?

- what is the date of the project? when there’s a date range, we can use the last published date but what if we don’t know when the site was last touched?

- what does it mean to publish a digital project? RRCHNM is the (self-)publisher

- type and keywords need to be project specific

- information on sponsors is crucial

- abstract describing the project is what people see when they visit the project page in MARS

Note there’s a line here about items not deleted from servers… we preserve sites that we are no longer updating even as we continue to sustain them, because there may come a day when we are no longer going to sustain them. By preserving them as soon as we’re done updating them, we get them in as close to original (and fully functional) condition as possible.

Speaking of which: if you only take one thing away from this blog today, whether you want to sustain or preserve your project, DECIDE WHAT TO DO AS SOON AS POSSIBLE, AND DO IT AS SOON AS POSSIBLE. Do it even before the project starts, if you can, but any time before the end of active development can work.

After active development ends, and there’s no money to pay for people to work on the project, things get harder to fix. Once supporting files go missing and code breaks, it’s harder to fix. Once the PI leaves the uni and you don’t know who to talk to about what the site is supposed to do and look like, it’s harder to fix. Once the entire project team has left the uni and you don’t know what promises were made to funders or outside organizations, it’s harder to fix.

If you need help with these conversations and decisions, Alison Langmead and the folks at Pitt have created an excellent series of resources to help you work your way through this: the Socio-Technical Sustainability Roadmap.

If you go into MARS, you’ll be able to see what a “preserved” digital project looks like for us. It’s basically some metadata and a bunch of file downloads, but anyone can download those files and see what the website looked like or spin up a local copy of the flattened site on their computer to explore or in some cases dig into the original code base to see how it was made. This is obviously a world away from a sustained project, but it’s not just deleted off the servers without making a publicly-accessible backup somewhere else first.

This isn’t to say we don’t just delete things sometimes. There are some things (pieces of a project, prototypes, ideas that don’t go anywhere, dev sites for projects no longer under development, and so forth) that don’t need to be sustained or preserved. Ephemerality can also be a deliberate choice and sometimes a valuable one, as anyone who’s chosen not to record a classroom Zoom discussion knows full well.

Conclusions

To get back to the big picture and if I may be forgiven getting mathematical and drawing a Venn diagram…

You will have to decide your own criteria for what goes into which circle. Our criteria are very much tailored for historians building websites for the purpose of democratizing history and making historical sources, methods, and arguments available beyond the ivory tower. Someone making digital art may feel very differently.

But whatever your criteria are, you need to borrow at least a little of the mindset of car owners or—to go back to the tried and true metaphor—of publishers and librarians who have scarce resources with which to publish or preserve a piece of scholarship.

We, as DHers, have scarce resources with which to sustain and preserve our digital scholarship. Most of us are doing it ourselves and don’t have unlimited time or money, especially with the “publish or perish” mentality of academia where you can’t just do one project and say that’s it, you’re good for the rest of your career. We accrue projects and then we need to make decisions about how to manage our backlist of previous projects.

Some suggested questions to help guide you:

- what are you saving?

  - BE THOUGHTFUL ABOUT THE PIECES OF YOUR PROJECT

- why are you saving it? who is going to want it?

  - BE REALISTIC ABOUT YOUR AUDIENCE!

- how are you saving it?

  - ONCE YOU KNOW YOUR AUDIENCE, YOU KNOW HOW THEY NEED TO ACCESS IT

- what resources do you have and what do you need to prioritize?

  - WE ALL LIVE IN THE REAL WORLD

- how much can you “future-proof”?

  - THE LESS WORK YOU HAVE TO DO IN THE FUTURE, THE MORE YOU CAN SAVE

I want to end once again by saying thanks to everyone at (or formerly at) RRCHNM who has helped on this gargantuan project! It’s easy to see me writing about this work and think I’ve done it all myself, when it wouldn’t have been possible without the rest of the RRCHNM sustainability team.